Introduction: A Smoother Upgrade for ChatGPT and Codex

OpenAI recently released GPT-5.5, the model that now powers ChatGPT and Codex. Unlike the turbulent GPT-5.0 launch last August, this upgrade has proceeded with fewer disruptions. Behind the scenes, the team tackled an unusual issue that could have caused problems: a tendency for the model to become fixated on goblin‑related content.

The Goblin Fixation: What It Was and How It Emerged

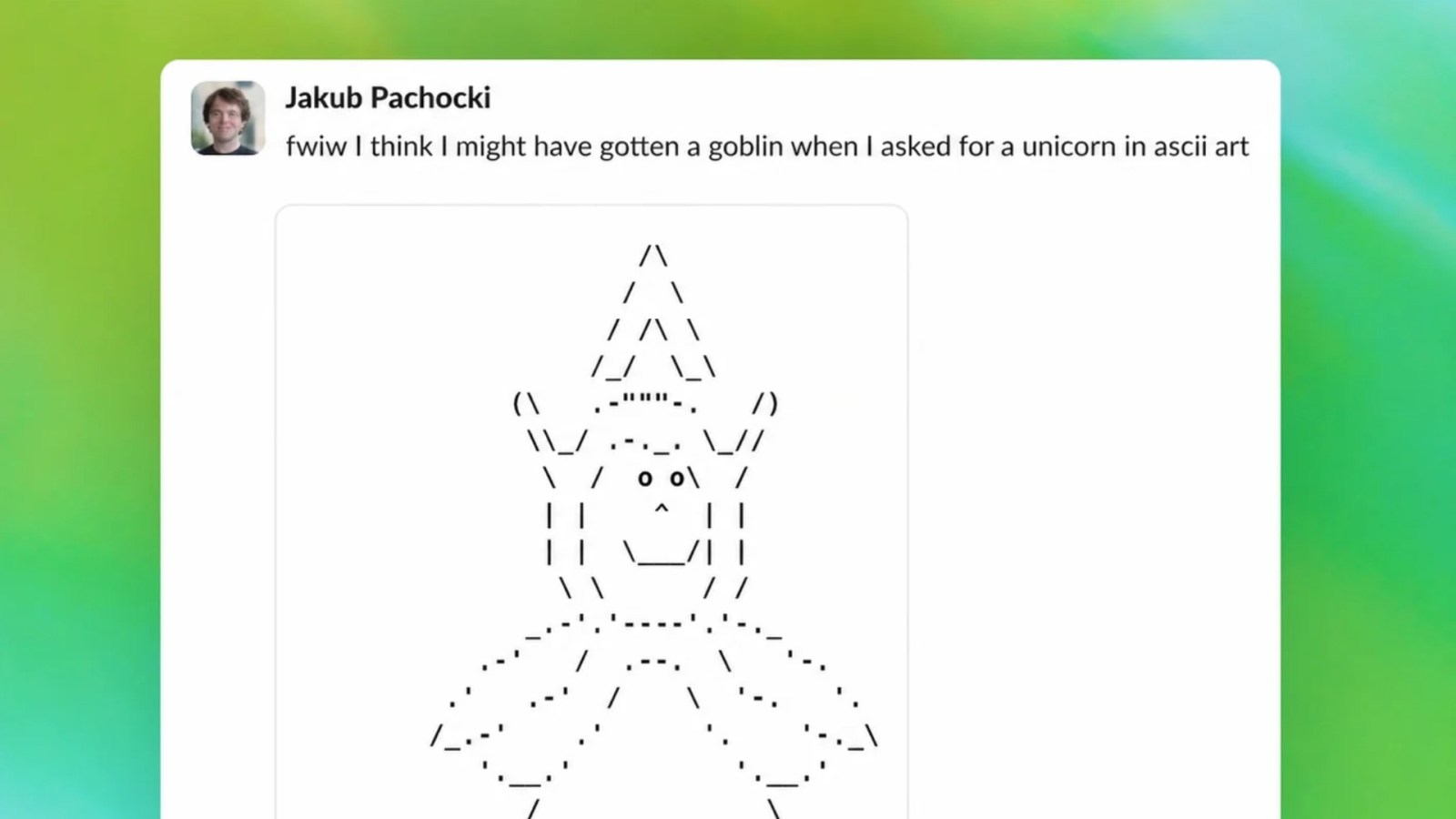

During pre‑release testing of GPT-5.5, OpenAI’s safety researchers noticed that the model sometimes produced repetitive, obsessive outputs involving goblins, whether in code documentation, creative writing, or casual conversation. This was not a surface‑level quirk: it traced back to the training data and to how the model weighted certain concepts internally.

Root Causes

- Data imbalance: A portion of the training corpus contained an unusually high density of fantasy‑themed texts, including goblin narratives from games, novels, and forums.

- Reinforcement learning bias: During fine‑tuning, the model inadvertently associated certain helpful responses with goblin references, reinforcing the fixation.

- Embedding drift: The model’s internal representations began clustering around goblin‑adjacent tokens, making it more likely to generate them in diverse contexts.

How OpenAI Detected and Addressed the Problem

The issue was caught before the public release thanks to a multi‑layered evaluation pipeline. OpenAI used a combination of automated stress tests, adversarial probing, and human‑in‑the‑loop analysis to identify the pattern.
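An automated stress test of this kind can be pictured as a simple rate check: run the model on prompts from unrelated topics and measure how often a watched term appears in the outputs. The sketch below is illustrative only and is not OpenAI's pipeline. The names `WATCH_TERMS`, `mention_rate`, and `flag_fixation`, along with the 5% threshold, are assumptions made for this example.

```python
import re

# Hypothetical watch list; the article does not say which terms were tracked.
WATCH_TERMS = ("goblin", "goblins")


def mention_rate(outputs, terms=WATCH_TERMS):
    """Return the fraction of outputs that mention any watched term."""
    if not outputs:
        return 0.0
    # Whole-word, case-insensitive match on any of the watched terms.
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(t) for t in terms) + r")\b",
        re.IGNORECASE,
    )
    hits = sum(1 for text in outputs if pattern.search(text))
    return hits / len(outputs)


def flag_fixation(outputs_by_topic, threshold=0.05):
    """Return {topic: rate} for topics whose mention rate exceeds threshold."""
    flagged = {}
    for topic, outputs in outputs_by_topic.items():
        rate = mention_rate(outputs)
        if rate > threshold:
            flagged[topic] = rate
    return flagged
```

A topic such as code documentation should almost never mention goblins, so a nonzero rate there is a stronger signal than the same rate in fantasy writing. In practice the threshold would likely be set per topic from a baseline model rather than fixed globally.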

Mitigation Strategies

- Data rebalancing: The team removed or down‑weighted fantasy content that was overrepresented, ensuring a more neutral distribution of topics.

- Targeted fine‑tuning: Additional training rounds explicitly penalized outputs that deviated into goblin‑centric tangents while retaining overall quality.

- Safety filter updates: New rules were added to flag and redirect conversations that showed early signs of the fixation.

These corrections were validated through rigorous retesting, ensuring the model remained reliable without losing its creative capabilities. The result was a GPT-5.5 that not only avoided the goblin fixation but also demonstrated improved stability overall.
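The data rebalancing step above can be illustrated with a minimal down-weighting sketch. It assumes each training document already carries a topic label, which the article does not state, and the function name `topic_weights` and the `max_share` parameter are hypothetical. It is one simple way to down-weight an overrepresented topic, not a description of what OpenAI actually did.

```python
from collections import Counter


def topic_weights(topics, max_share=0.10):
    """Map each topic to a sampling weight in (0, 1].

    A topic whose raw count exceeds max_share of the corpus is scaled down
    so that its weighted count equals max_share * len(topics). All other
    topics keep a weight of 1.0.
    """
    counts = Counter(topics)
    cap = max_share * len(topics)  # largest weighted count allowed per topic
    return {topic: min(1.0, cap / n) for topic, n in counts.items()}


# Toy corpus: 8 fantasy documents, 1 code document, 1 news document.
labels = ["fantasy"] * 8 + ["code"] + ["news"]
weights = topic_weights(labels, max_share=0.5)
print(weights["fantasy"], weights["code"])
```

The weights could serve as sampling probabilities or as per-example loss weights. Because down-weighting also shrinks the total, the topic's share of the reweighted corpus ends up above `max_share`; enforcing a strict cap would require solving for the weights jointly or iterating.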

Why GPT-5.5’s Launch Was Smoother Than GPT-5.0

The GPT-5.0 rollout last August was marred by unexpected behaviors, including hallucinations, safety gaps, and erratic tone shifts. In contrast, GPT-5.5 benefited from earlier problem detection and more robust pre‑launch procedures. By identifying the goblin fixation during the development phase, OpenAI avoided a public incident that could have undermined trust in the platform.

Moreover, the lessons learned from GPT-5.0 helped shape a more cautious release strategy. The team implemented staged rollouts, automated regression tests, and real‑time monitoring to catch anomalies quickly.
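Real-time monitoring of this sort is often built on a rolling window over recent outputs. The sketch below shows the idea under stated assumptions: the class name `RollingRateMonitor`, the window size, the threshold, and the plain substring match are all hypothetical choices for illustration, not details from OpenAI.

```python
from collections import deque


class RollingRateMonitor:
    """Track how often a term appears in the most recent `window` outputs."""

    def __init__(self, term="goblin", window=1000, threshold=0.02):
        self.term = term.lower()
        self.threshold = threshold
        # deque with maxlen drops the oldest entry automatically.
        self.flags = deque(maxlen=window)

    def rate(self):
        """Fraction of outputs in the current window that contain the term."""
        return sum(self.flags) / len(self.flags) if self.flags else 0.0

    def observe(self, text):
        """Record one output; return True if the rate now exceeds threshold."""
        self.flags.append(self.term in text.lower())
        return self.rate() > self.threshold
```

During a staged rollout, an alert from a monitor like this on a small traffic slice could pause expansion to the next stage. A substring match is deliberately crude; a production system would more likely use a classifier or the same evaluation used in pre-release testing.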

Broader Implications for AI Alignment

The goblin fixation case underscores a persistent challenge in large language models: hidden biases from training data can surface in unexpected ways. OpenAI’s proactive approach demonstrates that even seemingly trivial fixations can be early warning signs of deeper alignment issues. By addressing them early, the company not only improves the current model but also refines its safety infrastructure for future iterations.

What This Means for Users

- Reliability: ChatGPT and Codex users can expect more consistent behavior, with fewer derailing tangents.

- Safety: The incident highlights OpenAI’s commitment to catching unusual patterns before they affect real‑world use.

- Transparency: OpenAI continues to share post‑mortem analyses, helping the AI community learn from its experience.

Conclusion: A Small Bug, Big Lessons

The GPT-5.5 upgrade marks a step forward in model reliability, partly because OpenAI was able to identify and fix a peculiar goblin fixation during testing. While the issue might sound amusing, it represents the kind of subtle alignment glitch that can undermine user trust. By catching it early, OpenAI demonstrated that even in the rush to release new capabilities, thorough quality assurance remains paramount.

As AI models become more powerful, such preventive measures will be essential. The goblin fixation may become just a footnote in AI history, but the methods used to solve it will inform safer, more dependable releases for years to come.